This chip firm has evolved from a litigious entity into an AI enabler

Discussions of AI compute usually focus on the computational power of the CPUs and GPUs that run AI-related workloads.

However, what’s often sidestepped in mainstream discussions is the importance of memory interface chips, which manage the flow of data between processors and memory chips.

As AI’s bandwidth requirements have increased substantially, Rambus (RMBS), a firm that specializes in memory interface chips, has evolved from a litigious patent-licensing company into an important, but underrated, player in the AI boom.

Investor Essentials Daily:

Wednesday News-based Update

Powered by Valens Research

The AI boom has triggered a historic memory chip shortage as hyperscalers have bought up supplies and entered into multiyear contracts to secure this crucial component needed in AI systems and the data centers that power them.

Memory chips are essential for storing and supplying the data used in computational workloads across consumer electronics (e.g., smartphones, gaming consoles, and PCs) and, more importantly, AI data centers.

With hyperscalers buying up available supply, the price of dynamic random access memory (“DRAM”) chips, which temporarily store data that a CPU is actively using, has skyrocketed by nearly 700%.

Meanwhile, prices for NAND, the non-volatile flash memory used for long-term storage in most solid state drives (“SSDs”), have risen by 600%.
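To put a figure like this in perspective, it helps to convert the percentage rise into a price multiple. The short sketch below assumes the figure is an increase over the starting price; the function name `price_multiple` is ours, purely for illustration.

```python
def price_multiple(percent_increase: float) -> float:
    """Convert a percentage increase into a multiple of the starting price.

    Assumes the percentage is an increase over the starting price,
    so a 100% rise means the price has doubled.
    """
    return 1 + percent_increase / 100


# A 600% rise, as cited for NAND, means prices are seven times the starting level.
nand_multiple = price_multiple(600)
print(f"A 600% rise is a {nand_multiple:.0f}x price")
```

Under that reading, a chip that cost $10 before the shortage would cost $70 after a 600% rise.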

With memory demand rising dramatically, SK Hynix, Micron, and Samsung, the world’s biggest and most important memory chipmakers, have benefited financially.

However, these firms aren’t the only players in the semiconductor space that have benefited, and are positioned to keep benefiting, from the AI-fueled data center buildout.

Enter Rambus (RMBS), a technology firm that designs chip interface technologies and architectures used in both consumer and enterprise electronics.

Founded in 1990, the company is known for inventing Rambus DRAM (“RDRAM”) and its successors from the 1990s through the early 2000s. It also owns various patents on chip interface technologies, which it licenses to customers.

Rambus earned a reputation as a litigious patent-licensing entity through its many patent disputes with chipmakers like Samsung, Micron, and SK Hynix.

The firm has since moved on from its litigious past to become a high-growth company, owing to a product stack that’s crucial to high-performance computing and, more recently, AI workloads.

The computational power of CPUs and GPUs often dominates the narrative when talking about computing in general and AI compute in particular. However, what’s often ignored is the bottleneck that occurs when processor speeds far outpace memory bandwidth, known as the “memory wall.”
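A rough way to picture the memory wall is a roofline-style estimate, in which a processor’s achievable throughput is capped either by its peak compute or by how quickly memory can feed it. The sketch below is ours, not from Rambus or any vendor, and every number in it is a hypothetical placeholder chosen only to show the effect.

```python
def attainable_tflops(peak_tflops: float,
                      bandwidth_tb_per_s: float,
                      flops_per_byte: float) -> float:
    """Roofline-style cap on throughput.

    peak_tflops:        the processor's peak compute (hypothetical value)
    bandwidth_tb_per_s: memory bandwidth in terabytes per second (hypothetical)
    flops_per_byte:     how many operations the workload performs per byte moved
    """
    # TB/s multiplied by FLOPs per byte gives the TFLOPS that memory can sustain.
    memory_limited_tflops = bandwidth_tb_per_s * flops_per_byte
    return min(peak_tflops, memory_limited_tflops)


# Hypothetical processor: 1,000 TFLOPS peak, fed by 3 TB/s of memory bandwidth,
# running a workload that does 100 operations per byte.
baseline = attainable_tflops(1000.0, 3.0, 100.0)
# The same processor with memory bandwidth doubled to 6 TB/s.
faster_memory = attainable_tflops(1000.0, 6.0, 100.0)

print(f"Baseline: {baseline:.0f} TFLOPS; with double the bandwidth: {faster_memory:.0f} TFLOPS")
```

In this made-up case the processor delivers only 300 of its 1,000 peak TFLOPS, and doubling memory bandwidth lifts that to 600 without touching the processor itself. That is the sense in which memory, not compute, becomes the bottleneck.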

To ensure high-speed data traffic is managed properly, memory interface chips are required to operate alongside high-performance processors and memory chips.

And that’s where Rambus comes in. Its portfolio of memory interface chips includes DDR5 RDIMM and MRDIMM chipsets, which are currently used in data center applications. It also offers chips used in consumer electronics.

The company held a 45% share of the market for DDR5 registering clock drivers (“RCDs”) as of the end of 2025.

Aside from memory interface chips, the company offers Silicon IP solutions, which are grouped under Interface IP (for PCIe, HBM, GDDR, and DDR standards) and Security IP (for data center and IoT use).

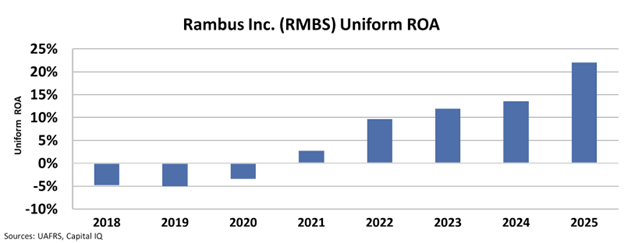

By leveraging its product stack and collecting licensing fees from its patents and other related technologies, the company has improved its returns over the past few years.

After generating negative returns from 2018 to 2020, Rambus’ Uniform return on assets (“ROA”) inflected positively in 2021, reaching 3% that year.

From there, Rambus’ returns grew quickly as the AI boom took hold, with its Uniform ROA more than doubling from 10% in 2022 to 22% in 2025.

Rambus is poised to keep growing in the next few years as bandwidth requirements for AI infrastructure continue to grow. And as long as hyperscalers continue to build and operate AI data centers, it will continue to play a crucial role, fueling higher returns.

Best regards,

Joel Litman & Rob Spivey

Chief Investment Officer &

Director of Research

at Valens Research